How we turn competitive intelligence into a flexible insight pipeline

“AI can help — but only if it understands context, relationships and intent.”

AI-driven competitive intelligence isn’t about throwing a large language model at a pile of articles and asking for a summary. That only scratches the surface.

To generate real insight, the system needs structure, memory and purpose. That’s why we designed our solution around agent-based AI, a graph-based data model and a fixed insight pipeline.

Agent-based behaviour instead of one generic model

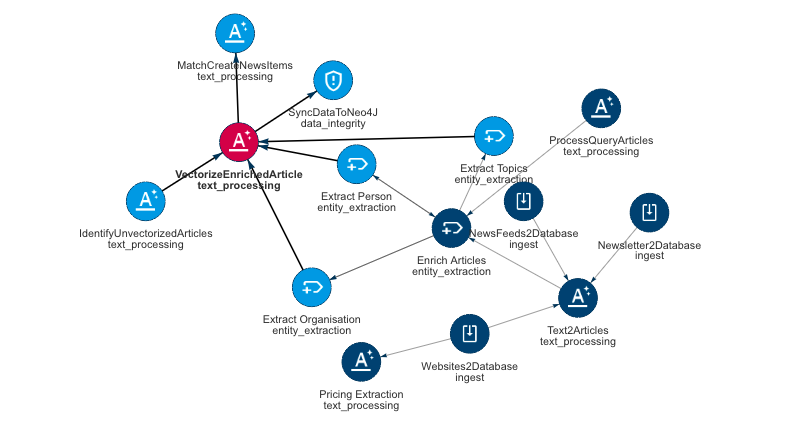

Rather than relying on a single, all-purpose AI model, we work with agents that can use LLMs, third-party APIs and other analysis techniques to produce specific insights.

Each agent therefore has a specific role. Some agents continuously monitor selected sources. Others assess relevance and impact. Additional agents cluster information by competitor, theme or market segment, and connect new signals to historical developments.

This agentic behaviour allows the system to do more than read — it interprets. It understands that multiple small updates may together signal a strategic shift, or that a major announcement may not actually change much in practice.
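To make the division of roles concrete, here is a minimal sketch in Python. It is an illustration only, not the production system: the `Signal` shape, the agent function names, the keyword weights and the threshold are all hypothetical, and a simple keyword table stands in for what would be an LLM or API call. It shows how several small updates in one cluster can add up to a flagged shift.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Signal:
    """One observed item, e.g. a news article (hypothetical shape)."""
    competitor: str
    theme: str
    text: str
    impact: float = 0.0  # filled in by the relevance agent


def monitor_agent(sources):
    """Role: gather raw signals from the selected sources (in-memory lists here)."""
    return [signal for source in sources for signal in source]


def relevance_agent(signals):
    """Role: score impact. A keyword table stands in for an LLM or API call."""
    weights = {"price": 0.4, "launch": 0.6, "acquisition": 0.9}
    for s in signals:
        text = s.text.lower()
        s.impact = max((w for k, w in weights.items() if k in text), default=0.1)
    return signals


def cluster_agent(signals):
    """Role: group signals by competitor and theme."""
    clusters = defaultdict(list)
    for s in signals:
        clusters[(s.competitor, s.theme)].append(s)
    return dict(clusters)


def shift_agent(clusters, threshold=1.0):
    """Role: flag clusters whose combined impact reaches the threshold,
    so several small updates can together count as one notable shift."""
    return sorted(
        key for key, items in clusters.items()
        if sum(s.impact for s in items) >= threshold
    )


# Assumed sample data: three small pricing updates and one unrelated item.
news = [
    Signal("Acme", "pricing", "Price cut on the starter plan"),
    Signal("Acme", "pricing", "New price tier for large customers"),
    Signal("Acme", "pricing", "Price list removed from website"),
    Signal("Globex", "product", "Minor bug fix release"),
]

flagged = shift_agent(cluster_agent(relevance_agent(monitor_agent([news]))))
print(flagged)
```

None of the three pricing items crosses the threshold alone, but together they do, while the single low-impact product item is ignored.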

Agentic pipeline design: example of a flexible pipeline with AI agents

Scaling AI-driven competitive intelligence with Neo4j

Competitive intelligence is fundamentally relational. Competitors, products, prices, campaigns, markets and news events are all connected.

That’s why we store our data in a vectorised Neo4j graph database. The graph structure makes relationships explicit: which competitor is linked to which product, which pricing move aligns with which campaign, which news items relate to the same strategic theme.

Vectorisation adds a semantic layer on top. Instead of searching for exact keywords, the system retrieves information based on meaning and context. This makes it possible to surface relevant insights even when the wording differs across sources.
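The combination of explicit relationships and meaning-based retrieval can be sketched in a few lines of Python. This is a toy in-memory stand-in, not Neo4j and not our schema: the `TinyGraph` class, the `ABOUT` relation, the node ids and the three-dimensional embeddings are all assumptions made up for illustration. In practice the embeddings would come from an embedding model.

```python
import math
from dataclasses import dataclass


@dataclass
class Node:
    id: str
    label: str
    text: str
    embedding: list  # toy vector; a real one would come from an embedding model


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyGraph:
    """In-memory stand-in for a graph store with embeddings on nodes."""

    def __init__(self):
        self.nodes = {}
        self.edges = []  # (source_id, relation, target_id)

    def add_node(self, node):
        self.nodes[node.id] = node

    def relate(self, source_id, relation, target_id):
        self.edges.append((source_id, relation, target_id))

    def neighbours(self, node_id):
        """Graph layer: everything directly linked to a node."""
        outgoing = [(rel, dst) for src, rel, dst in self.edges if src == node_id]
        incoming = [(rel, src) for src, rel, dst in self.edges if dst == node_id]
        return outgoing + incoming

    def similar(self, query_embedding, label, k=1):
        """Semantic layer: rank nodes of one label by similarity to the query."""
        candidates = [n for n in self.nodes.values() if n.label == label]
        candidates.sort(key=lambda n: cosine(query_embedding, n.embedding), reverse=True)
        return candidates[:k]


graph = TinyGraph()
graph.add_node(Node("acme", "Competitor", "Acme", [0.3, 0.3, 0.3]))
graph.add_node(Node("news1", "News", "Acme lowers subscription fees", [0.9, 0.1, 0.0]))
graph.add_node(Node("news2", "News", "Acme opens office in Berlin", [0.0, 0.2, 0.9]))
graph.relate("news1", "ABOUT", "acme")
graph.relate("news2", "ABOUT", "acme")

# Assumed embedding for the query "price cut": close to news1 in meaning,
# although the two texts share no keyword.
hits = graph.similar([1.0, 0.0, 0.1], label="News", k=1)
print(hits[0].text, graph.neighbours(hits[0].id))
```

The vector lookup finds the relevant item despite the different wording, and the graph lookup then tells you which competitor it belongs to.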


A flexible pipeline from data to insight

All outputs follow the same pipeline structure:

First, we define the goal — what question are we trying to answer?

Then we establish context, such as market scope, competitors and historical reference points.

Next comes data collection, followed by analysis to detect patterns, trends and anomalies.

Only then do we generate insights, which are finally translated into a clear presentation.

This structure ensures that insights are consistent, explainable and reproducible — not just interesting, but defensible.
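The stage order described above can be sketched as a list of plain functions that always run in the same sequence. This is a simplified illustration under assumed names: the `Run` record, the stage functions, the sample prices and the 10% deviation rule are hypothetical, and hard-coded data stands in for real source monitoring.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """Everything one pipeline run produces, kept together for traceability."""
    goal: str
    context: dict = field(default_factory=dict)
    data: list = field(default_factory=list)
    findings: list = field(default_factory=list)
    insights: list = field(default_factory=list)
    report: str = ""
    trace: list = field(default_factory=list)  # names of the stages that ran


def establish_context(run):
    # Assumed context: one competitor and a historical reference price.
    run.context = {"competitors": ["Acme"], "baseline_price": 100.0}
    return run


def collect_data(run):
    # Hard-coded observations stand in for real data collection.
    run.data = [("Acme", 99.0), ("Acme", 101.0), ("Acme", 80.0)]
    return run


def analyse(run):
    # Anomaly rule (an assumption): more than 10% away from the baseline.
    baseline = run.context["baseline_price"]
    run.findings = [
        (name, price) for name, price in run.data
        if abs(price - baseline) / baseline > 0.10
    ]
    return run


def generate_insights(run):
    run.insights = [
        f"{name} priced at {price:.0f}, more than 10% off baseline"
        for name, price in run.findings
    ]
    return run


def present(run):
    lines = [f"Goal: {run.goal}"] + [f"- {insight}" for insight in run.insights]
    run.report = "\n".join(lines)
    return run


STAGES = [establish_context, collect_data, analyse, generate_insights, present]


def run_pipeline(goal):
    run = Run(goal=goal)  # the goal is defined before any other stage runs
    for stage in STAGES:
        run = stage(run)
        run.trace.append(stage.__name__)
    return run


result = run_pipeline("Is Acme changing its pricing strategy?")
print(result.report)
```

Because every run records its goal, context, data and the stages it went through, the same inputs give the same report, and each insight can be traced back to the finding behind it. Individual stages can be swapped out without changing the order.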

Our own LLM infrastructure

We run our own LLM server and are currently testing multiple models side by side. This gives us full control over data handling, performance and model selection per use case.

More details on this later — this part of the stack is evolving rapidly.

Technology in service of clarity

The technology is not the end goal. The goal is faster, sharper and more reliable competitive insight. AI gets you to insight faster, but insights are engineered, not generated. People decide what they mean.

If you’re curious to see how this works in practice, we’re happy to show you around. Let’s talk!

Contact us or send an email to info@kentivo.com
